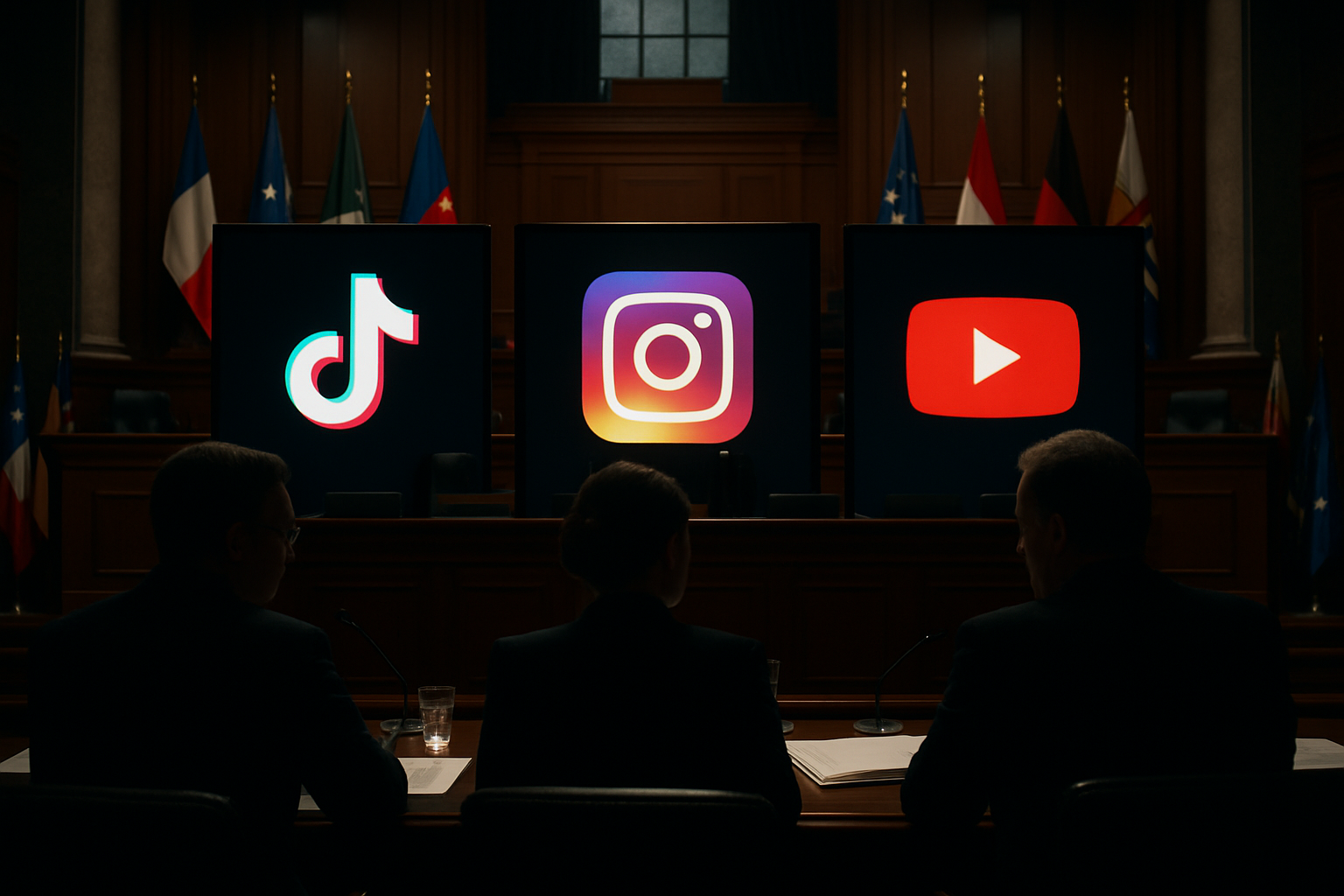

Government authorities on three continents launched investigations and enforcement actions against major social media platforms this week, in what appears to be one of the most coordinated international regulatory responses the platform industry has faced. South Korea's media watchdog opened a probe into Instagram's account deactivation practices, while Portuguese and Japanese media reported on a landmark U.S. trial in which Google and Meta vehemently contested addiction allegations.

South Korean Regulatory Action Against Instagram

South Korea's communications watchdog announced Wednesday it has launched an investigation into Instagram's systematic deactivation of user accounts throughout 2025. The probe, conducted by the Korea Communications Commission (KCC), focuses on whether Meta's photo-sharing platform violated user protection regulations when it suspended accounts without adequate explanation or appeal processes.

The investigation represents a significant escalation in South Korea's oversight of global technology platforms, particularly as the country observes the European Union's aggressive regulatory framework that has resulted in billions in potential fines for platforms like TikTok over "addictive design" features.

Industry observers note that South Korea's action comes amid growing international coordination on platform accountability, with the country potentially joining the European movement that now includes Spain's criminal executive liability framework, Greece's age verification systems, and coordinated enforcement across multiple jurisdictions.

Historic U.S. Trial Challenges Tech Giants

Meanwhile, a landmark legal proceeding in the United States has placed Google and Meta under intense scrutiny over allegations that their platforms deliberately create addictive experiences for young users. Both Portuguese and Japanese media reported extensively on the trial's second day, where YouTube's legal team made the extraordinary claim that the Google-owned platform is "not technically social media" in an apparent attempt to escape regulation.

YouTube's defense strategy rests on the argument that the platform is a video hosting service rather than a social network, even though it features comment systems, community posts, and algorithm-driven recommendations that mirror the engagement patterns of traditional social media. The company's lawyers insisted Tuesday that YouTube was not "intentionally addictive," distancing the platform from broader criticism of the social media industry's business model.

Google and Meta's coordinated defense comes as they face mounting evidence that they deliberately designed features to maximize user engagement time, particularly among vulnerable younger users. The trial has heard testimony about unlimited scrolling, autoplay features, and personalized recommendation algorithms specifically engineered to capture and hold user attention.

"The platforms have consistently prioritized engagement metrics over user wellbeing, particularly when it comes to children and teenagers."

— Digital Rights Advocate (speaking to international media)

European Regulatory Revolution Provides Context

These enforcement actions come amid an unprecedented wave of European regulation targeting social media platforms. Spain leads with a five-point framework that includes a complete social media prohibition for users under 16, criminal liability for executives that exposes tech leaders to the risk of imprisonment, mandatory biometric age verification, and legal definitions of algorithmic manipulation.

The European Commission's investigation into TikTok has found the platform violated the EU Digital Services Act through "addictive design" features including unlimited scrolling, automatic video playback, and personalized recommendations designed to maximize user dependency over wellbeing. TikTok faces potential penalties of up to 6% of its global annual revenue, which could amount to billions of euros.

Industry resistance has escalated dramatically, with Elon Musk calling Spanish Prime Minister Pedro Sánchez a "fascist totalitarian" and Telegram founder Pavel Durov sending mass alerts to Spanish users warning of a "surveillance state." European officials argue this coordinated opposition demonstrates the urgency of regulatory intervention.

International Coordination Prevents Jurisdiction Shopping

The simultaneous timing of regulatory actions in South Korea and Europe and of legal proceedings in the United States suggests international coordination designed to prevent platforms from simply relocating operations to more permissive jurisdictions. This represents a fundamental shift away from isolated national responses and toward a more unified global framework.

Australia's implementation of an under-16 social media ban, which has removed 4.7 million teen accounts since December 2025, has provided a technical feasibility model that other nations are adapting to their own legal and cultural contexts. The Australian experience suggests that aggressive platform regulation is achievable with sufficient government commitment.

Privacy advocates have raised concerns about the biometric age verification systems required for effective enforcement, warning that infrastructure designed for child protection could enable broader government surveillance capabilities. However, supporters argue that existing platform data collection practices already create comprehensive user profiles, and regulation would subject these practices to legal scrutiny rather than expand surveillance.

Criminal Executive Liability Revolution

Perhaps the most significant development is Spain's introduction of criminal executive liability, creating personal legal risks for platform executives beyond traditional corporate penalties. This unprecedented approach could fundamentally alter how technology leaders approach regulatory compliance, transforming violations from business costs into personal imprisonment risks.

The criminal liability framework represents the most aggressive platform regulation in global history, potentially establishing precedents that could trigger worldwide adoption. Success could reshape the relationship between democratic governments and multinational technology corporations, while failure might strengthen industry arguments against government intervention.

Research Supporting Age Restrictions

Scientific evidence supporting the regulatory push continues to accumulate. Dr. Ran Barzilay's research at the University of Pennsylvania demonstrates clear connections between early smartphone exposure and sleep disorders, weight problems, and diminished cognitive abilities among children and adolescents. Children exposed to devices before age 5 show significantly higher rates of sleep disruption and decreased physical activity.

Global statistics indicate that 96% of children aged 10-15 use social media, that 70% have been exposed to harmful content, and that over 50% have encountered cyberbullying. Supporters cite these figures as evidence for the age-based restrictions being implemented across multiple jurisdictions.

Industry Technical Challenges

The regulatory push comes amid a global memory-chip shortage affecting major semiconductor manufacturers Samsung, SK Hynix, and Micron. Sixfold price increases are constraining the hardware infrastructure needed for sophisticated content moderation and age verification systems. Supply shortages are expected to continue until 2027, when new manufacturing facilities come online.

Platforms face the technical challenge of implementing "real age verification" systems that go beyond simple checkbox confirmations to include biometric or identity document authentication. Cross-border enforcement requires unprecedented international cooperation between regulatory authorities, creating new diplomatic and technical coordination requirements.

Stakes for Democratic Governance

The coordinated regulatory response is the most significant test in the internet era of democratic governments' ability to regulate multinational technology platforms. The outcome will determine whether criminal executive liability becomes a global standard for platform accountability or whether coordinated industry resistance can successfully challenge government authority.

Success could trigger continental and potentially global adoption of similar frameworks, fundamentally reshaping the balance between technological innovation and child protection in the digital age. Failure might strengthen industry arguments that democratic oversight of global platforms is technically and legally unfeasible.

The international community is monitoring these developments closely, as they will establish precedents affecting millions of families, platform operations worldwide, and the fundamental relationship between democratic institutions and technological power in the 21st century.

Looking Forward

Participating European nations must each secure parliamentary approval during 2026 if coordinated implementation is to take effect before year-end. The simultaneous timing is designed to prevent jurisdiction shopping and ensure that platforms cannot simply relocate to avoid regulation.

As governments worldwide grapple with the balance between protecting children and preserving digital rights, these February 2026 developments may prove to be the turning point that determines how democratic societies manage the intersection of technology, childhood development, and governmental authority for decades to come.